The growing use of tablets, smart phones and cloud services is making it more complicated for IT organizations to manage user authentication and authorization to enterprise resources – as if it wasn’t difficult enough.

Consequently, the market for technology that provides secure single sign on is heating up. I delved into the growing identity management as a service (IDMaaS) landscape a few months ago (see Going Cloud: Identity Management as a Service). In recent weeks, a number of companies have moved to up the IDMaaS ante, including Centrify, Microsoft and Okta. And this week IBM rolled out an upgrade to its Tivoli Security Access Manager, with the launch of ISAM v. 7.0.

There are a slew of other players, including CA, Intel and its McAfee division, Ping Identity, SailPoint, Symplified, Symantec and VMware, among others, that have furthered their push to advance IDMaaS in 2012 and will undoubtedly continue to do so in the coming year.

Looking at the latest developments alphabetically, Centrify earlier this month launched DirectControl for SaaS, which authenticates users via their Active Directory credentials to access software as a service-based solutions. Among the SaaS offerings Centrify supports are Box, Google Apps, Marketo, Microsoft’s Office 365, Postini, Salesforce.com, WebEx, Zendesk and Zoho.

Centrify designed DirectControl for SaaS to allow single sign on access to these and other SaaS offerings with a user’s Active Directory credentials, explained Centrify CEO Tom Kemp. Users can access any resource tied to Active Directory from traditional mobile PCs as well as Android and iOS-based smartphones and tablets, whether they’re company issued or owned by employees.

Kemp said Centrify’s new offering doesn’t require changes to Active Directory or to endpoint security systems. “Our cloud offering is in effect an identity bridge to a customer's Active Directory,” Kemp said.

IBM’s new Tivoli ISAM v7.0 tackles IDMaaS from a slightly different perspective. Like Centrify’s offering, Big Blue said it provides context-aware management for mobile devices. But the new ISAM helps centrally manage rights throughout the policy lifecycle from file creation to publishing, while enforcing compliance requirements.

In addition to controlling access to in-house systems, apps and data, the new ISAM release provides federated single sign on to various cloud service providers.

Looking to extend its Active Directory technology to the cloud, Microsoft is expected to launch Windows Azure Active Directory at some point next year. While Microsoft hasn’t said when it will be generally available, the WAAD is now available for beta testing.

Active Directory made its move to the cloud in 2011 with the launch of Office 365, when Microsoft permitted customers to federate their Active Directory domains to the service. Now users’ Active Directory credentials can be used in Microsoft’s other cloud offerings, including the online versions of its Dynamics applications and Windows Intune.

The next step for Active Directory’s cloud migration is to Microsoft’s Windows Azure service. In beta now, Microsoft last month said it will offer access control in Windows Azure Active Directory (WAAD) free of charge upon release.

“If you’re building a service in Windows Azure, you can create your own tenant in Azure and create users and we let you manage those users, who can be connected to your cloud services,” Uday Hegde, principal group program manager for Active Directory at Microsoft, told me earlier this month. Furthermore, Hegde said Windows Server customers running Active Directory on premises can connect to WAAD and avail themselves of all its features.

Microsoft is betting its large customer base running Active Directory will propagate it to WAAD. It stands to reason those who move Windows Server apps to Windows Azure, or build new ones, will provide authentication services through WAAD.

Yet there’s a lot of money riding on IDMaaS alternatives. Okta earlier this month received a cash infusion of $25 million in Series C funding led by Sequoia Capital, bringing the total amount it has raised to $52 million.

Okta is using Active Directory and WAAD APIs to enable single sign on to SaaS and traditional apps. “A CIO wants to have one single identity system that connects them to these different applications,” said Okta VP Eric Berg.

Indeed I've heard that refrain for many years. We’ll see if the latest offerings, and a number of others, deliver.

Posted by Jeffrey Schwartz on 12/20/2012

It looks like CA Technologies is going to step up its emphasis on cloud computing, based on yesterday's announcement that it has appointed former Taleo CEO Michael Gregoire as its next chief executive.

Gregoire will replace existing CEO William McCracken effective Jan. 7, CA announced yesterday. McCracken, 70, is retiring after a three-year stint as CEO. Like his predecessor, John Swainson, McCracken spent more than three decades at IBM. Though former CEO Swainson retired in early 2010, he re-emerged in February as president of Dell's newly formed software group, where he recently engineered the $2.4 billion acquisition of Quest Software, a CA rival.

The choice of Gregoire appears to suggest CA's board wants to accelerate its cloud push. As CEO of Taleo, provider of software as a service talent management software, he helped engineer the sale of Taleo to Oracle this year for $1.9 billion. Gregoire's credentials also include stops at CRM and ERP software supplier PeopleSoft, also acquired by Oracle, and EDS.

Now Gregoire, 46, is moving from Silicon Valley to Long Island and it will be interesting to see what he has in store for the longtime mainframe systems management vendor founded by Charles Wang. Of course CA has diversified quite a bit and over the past few years has emphasized management of cloud based systems and apps with a number of small acquisitions. But overall, while the company is known for a solid though highly diversified line of enterprise systems and cloud management software, it has enjoyed only moderate growth.

The board's decision to tap a Silicon Valley veteran rather than go back to the well of IBM execs could suggest a more aggressive growth posture for CA. The question is, will Gregoire look to let shareholders cash out by selling CA in its entirety or in pieces? Or will he look to build up and diversify CA's cloud portfolio?

"I believe CA Technologies has a compelling value proposition, a strong reputation and a growing relevance for customers, software engineering, and partners," Gregoire said in a statement. "It is clear that CA Technologies is well-positioned to lead the industry as companies find it more critical than ever to manage and secure their IT environments in the cloud and efficiently provide business services that enable them to win in the marketplace."

It stands to reason Gregoire may look at CA with a fresh set of eyes. "He brings a different perspective to CA that they haven't had in a chief executive," Matt Hedberg, an equity analyst with RBC Capital Markets, told Newsday yesterday (subscription required). "It's certainly going to help their long-term vision and outlook."

Posted by Jeffrey Schwartz on 12/13/2012

Citrix wants to ensure its place in managing employee-owned tablets, PCs and smartphones as well as cloud-based file sharing with the planned acquisition of leading MDM supplier Zenprise.

Citrix announced the agreement on Tuesday, for undisclosed terms. Citrix plans to integrate the Zenprise MobileManager, Zencloud and Zensuite offerings with its own MDM solution CloudGateway and the Me@Work portfolio, which includes the respective GoToMeeting and ShareFile cloud-based conferencing and document storage services.

"Consumerization and BYO have given rise to very difficult challenges for businesses in enabling a productive, mobile workforce while still maintaining tight controls over company information," said Sumit Dhawan, Citrix vice president and general manager of mobile solutions. "Zenprise was a clear choice for Citrix, with its leading MDM product, an experienced team, a history of innovation, and a footprint on more than one million devices. With a complete Citrix enterprise mobility solution, customers have all the necessary pieces to manage and secure mobile apps, content and devices."

When Citrix launched CloudGateway last year, it described it as a tool to manage and securely distribute mobile apps in addition to managing PCs, Web and software as a service (SaaS) apps. It remains to be seen how Zenprise tools are integrated with CloudGateway but Zenprise has focused on MDM for nearly a decade and is seen as a leader by analyst firms Forrester and Gartner.

Presumably it means Zenprise will also provide management of Citrix's various cloud offerings, including its new ShareFile. But Zenprise's flagship MobileManager software allows IT to control the deployment, configuration, provisioning based on enterprise policies, security (including the blocking of data synchronization with public cloud services such as iCloud), monitoring and decommissioning (wiping) of devices.

For those that don't want to deploy Zenprise MobileManager on site, the company offers Zencloud, which promises 100 percent uptime SLAs and is housed in an SSAE 16/SOC 1, FISMA Moderate-compliant facility.

Posted by Jeffrey Schwartz on 12/06/2012

VMware and its corporate parent EMC next year will form a new business entity, called Pivotal, that brings together the two companies' respective cloud platforms and products that enable the processing of big data. It's not clear whether the companies are spinning these assets off outright or how they will structure the new organization. VMware said it will formally announce the business structure next quarter. So far, we do know that the unit will be led by Paul Maritz, the former VMware CEO and current EMC chief strategy officer.

Pivotal will aim to provide a common entity for developers to build applications that run on VMware's various platform as a service (PaaS) offerings as well as software that analyzes huge volumes of data. Pivotal is scheduled to begin operations in the second quarter of next year.

Approximately 600 VMware employees and 800 from EMC will be assigned to Pivotal, which will include Pivotal Labs, the provider of agile software development tools that EMC acquired in March, which lets developers build apps that can scale to cloud infrastructures using big data. Pivotal will also include EMC's Greenplum data warehouse appliance business.

VMware's contribution will include its vFabric (including Spring and Gemfire), Cloud Foundry and Cetas. It appears the vCloud Suite will remain part of VMware, as indicated in a blog post published Tuesday by senior VP of communications Terry Anderson.

"The resulting Pivotal Initiative solutions will be optimized for the VMware vCloud Suite, helping to ensure that customers benefit from the best cloud architecture available, top to bottom," Anderson said. "Simultaneously, VMware will continue to drive application-aware innovations into its core platform, ensuring best-in-class performance of any application when deployed onto the VMware vCloud Suite."

The announcement and the rationale for the move were vague, though numerous reports have speculated that the company has considered some form of spinoff of assets such as its open source cloud PaaS venture Cloud Foundry. VMware is not commenting beyond Anderson's blog post.

"There is a significant opportunity for both VMware and EMC to provide thought and technology leadership, not only at the infrastructure level, but across the rapidly growing and fast-moving application development and big data markets," she noted. "Aligning these resources is the best way for the combined companies to leverage this transformational period, and drive more quickly towards the rising opportunities."

Forrester Research analyst Dave Bartoletti said in a blog post IT pros should welcome the move, which will refocus VMware on the datacenter and on furthering its push into software defined network virtualization.

"This move helps to end the cloud-washing that's confused customers for years: there's a lot of work left to do to virtualize the entire datacenter stack, from compute to storage and network and apps, and the easy apps, by now, have mostly been virtualized," Bartoletti wrote. "The remaining workloads enterprises seek to virtualize are much harder: they don't naturally benefit from consolidation savings, they are highly performance sensitive and they are much more complex."

Analyst James Staten, Bartoletti's colleague at Forrester, noted in his blog that by moving their cloud platform offerings to a separate business, EMC and VMware will be in a better position to appeal to developers. It's "way too soon to speculate on the end results but this could help EMC play a significant role in cloud development services," Staten said. "Hopefully this new group will focus on cloud-based delivery and not build its business model around on-premise software license sales."

The news came just one day after VMware released new tools to ease the procurement and reach of cloud apps using its vCloud Suite. The company's vFabric Application Director 5, announced earlier this year, gains support beyond traditional VMware environments, notably Amazon Web Services EC2 public cloud and Microsoft's Hyper-V virtual machine platform.

The latest version of vFabric Application Director is aimed at easing the deployment of hybrid cloud applications via certified VMware-approved templates and various tools including middleware, data management and security software.

"It allows us to take the exact same [infrastructure] blueprint, deploy it onto vSphere, vCloud or Amazon EC2 without having to change anything," explained Shahar Erez, VMware's director of applications management products. "This gives organizations the flexibility to leverage their blueprint across clouds without being locked in."

To provide these various blueprints, reference architectures and OS-loaded templates for vFabric Application Director, VMware launched its Cloud Applications Marketplace. Erez said the marketplace already hosts 100 downloadable solutions from 30 ISVs and systems integrators. Among them are solutions from Bluelock, Cognizant, Couchbase, Jaspersoft, Puppet Labs, Radware, Riverbed and SugarCRM.

"You would find load balancers, firewalls, WAN accelerators, SSL accelerators, application servers, databases, message queues and memcaches," he said, as well as "applications for blogging, content management, bug tracking and cloud provisioning blueprints available with a single click."

VMware this week also released its vCenter Operations Management Suite 5.6, which adds application performance and configuration management capabilities and is designed for rapid configuration of virtual machines, Erez said. VMware is offering the performance management capabilities of the Operations Management Suite as a separate download with all versions of VMware vSphere.

Posted by Jeffrey Schwartz on 12/05/2012

At its first-ever customer and partner gathering, Amazon Web Services officials this week were loud and clear in their assertion that corporations and the public sector should hasten their transition from operating traditional datacenters to instead running their infrastructures in Amazon's rapidly growing cloud service.

While Amazon is already the leading cloud infrastructure provider, the company used its re:Invent conference in Las Vegas to crank up the volume on its message that its vast array of cloud-based compute, storage and application services offer unlimited capacity and elasticity, coupled with its infrastructure-on-demand architecture. It showcased many customers that are already doing so such as Netflix, Nasdaq and the team of NASA and Jet Propulsion Laboratories, along with 300 government agencies, as well as newer companies such as Dropbox, Pinterest and Spotify.

By shifting more workloads to Amazon's cloud services, company officials emphasized that organizations can lower their IT costs by reducing or eliminating capital expenditures and moving to an operational expense model. Such a shift will also let them be more agile to the needs of the business, the officials emphasized.

Of course this pitch is hardly anything new to anyone who has followed the growth of cloud computing over the past five years. But the typically low-key Amazon came out swinging at re:Invent in an effort to leave little doubt that its cloud operation is not just a side business of the much more prevalent Amazon.com online retail site.

In fact, Amazon officials suggested they are aiming to marginalize what they called "old guard technology companies" like EMC, Hewlett Packard, IBM and Oracle, among others. "The economics of what we're doing are extremely disruptive for old guard technology companies," said Andy Jassy, senior VP of Amazon Web Services, in yesterday's opening keynote address. "These are companies that have lived on 60 to 80 percent gross margins for many, many years, and in fact they set expectations that that's what they are doing. This is what they communicate externally."

One might dismiss such statements as rhetoric except Amazon has a track record of putting the squeeze on its rivals. Its online retail operation has redefined the business models of such players as Barnes & Noble and Best Buy, while contributing to the demise of Borders, Circuit City and CompUSA.

"High-margin businesses have been around forever in lots of industries and they are obviously a very valid and successful business model," Jassy said. "It's just not ours. It is radically different to run 60 to 80 percent gross margin business than a high-volume, low-margin business. The vast majority of computing over the next 10 years, it stands to reason, is going to be a high-volume, low-margin business."

Forrester Research analyst James Staten, who was at re:Invent, said in a telephone interview that Amazon's volume business model calls for 10 to 12 percent margins. "If you look at all the other competitors out there, they are coming from high-margin businesses that are not high volume, and they are trying to see how to navigate or bring into their business a low-margin, high-volume business as a complement and that's just really tough," Staten said. "Most of these guys are trying to figure out how they can do that or if they need to do that or how fast they need to do that."

Jassy also made light of private and hybrid clouds suggesting they are merely efforts by entrenched IT providers to preserve existing business models, while failing to offer the benefits of operating IT as a service. "Why are these old guard technology companies so desperately trying to get you to buy the private cloud?" he asked. "Why? I think the answer is, the economics of what we're doing are extremely disruptive for old guard technology companies."

As Amazon's cloud business grows, company officials said the economics of offering its services improve because the company is adding more capacity and is therefore able to offer it at lower cost. For example, since launching its Simple Storage Service, known as S3, the company has reduced the price 24 times, the latest taking effect yesterday. The company slashed its storage rates by 25 percent on average. "They've made it so storing almost anything in Amazon is cheaper than storing it in your own datacenter," Staten said.

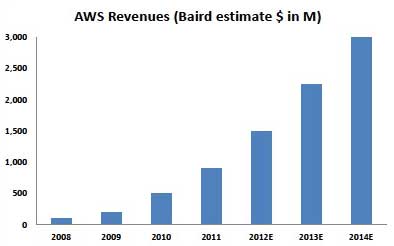

Amazon is regarded as the largest provider of cloud infrastructure services. While it doesn't break out the revenues for its cloud business, the investment research firm R.W. Baird estimated in a research note this week it will be a $1.5 billion business this year. Within two years, that business will double to $3 billion, R.W. Baird has forecast.

Figure 1. AWS financial forecast from R.W. Baird. Source: R.W. Baird.

In his keynote today, Amazon CTO Werner Vogels echoed Jassy's sentiment that the company is aiming to change the economics of datacenter operations with its cloud offerings. "Being constrained by hardware makes it impossible to do the things you want to do as a business," Vogels said.

Despite the growing use of cloud computing, Amazon and other cloud providers still face a skeptical audience of IT decision makers, Forrester's Staten warned. "The biggest problem they face is many enterprises only know Amazon as an online retailer," Staten said. "They have significant amount of skepticism about this non-enterprise player being able to support their enterprise game."

Posted by Jeffrey Schwartz on 11/29/2012

Less than seven years ago, Amazon Web Services disrupted traditional datacenter computing with its cloud-based infrastructure services, allowing enterprise customers to provision compute and storage and pay based on usage without having to make capital outlays for hardware or software. Many who have moved to this model of paying for IT infrastructure as an operational expense have enjoyed considerable reductions in capital expenditures.

Now, Amazon is looking to similarly upend the way organizations deploy data warehouses.

Kicking off its first-ever partner and customer conference on Wednesday, Amazon launched a cloud-based data warehousing service called Redshift. Amazon says the service will substantially reduce the cost of deploying data warehouses by eliminating the need to acquire conventional software and hardware provided by the likes of EMC, Hewlett-Packard, IBM, Microsoft, Oracle, SAP and Teradata.

In his opening keynote address at the company's re:Invent conference in Las Vegas, Amazon Web Services Senior VP Andy Jassy cited an IBM-commissioned report that found typical data warehouse installations can cost anywhere from $19,000 to $25,000 per terabyte per year. Using reserved data warehouse instances on Amazon's forthcoming Redshift, the average annual cost will amount to less than $1,000 per terabyte, according to Jassy.

"It allows you to easily and rapidly analyze petabytes of data. It's about a tenth of the cost of traditional data warehouse solutions. It automates the deployment and it works with the popular business intelligence tools," Jassy told 6,000 attendees present at re:Invent and 12,000 registered viewers (including yours truly) of the live webcast.

Customers can choose from either 16 TB nodes or 2 TB nodes and can configure clusters of up to 100 nodes, for a maximum of 1.6 petabytes, with pricing starting at 85 cents per hour for a 2 TB node. The data is stored in columnar format, Jassy said, which means that the I/O moves much more quickly and queries will return much faster than with a typical data warehouse solution. The service supports queries with standard SQL, JDBC and ODBC, he noted.

The parent company of AWS, the flagship Amazon retail site, has been testing Redshift for several months. Jassy said the group took 2 billion rows of data and ran six of its most complex queries typically performed in its existing Netezza (now part of IBM) data warehouse. On two 16-terabyte nodes of Redshift, it cost $3.65 per hour equating to $32,000 per year. "Instead of spending millions of dollars, they spent $32,000 a year and ended up with 10 times faster queries," Jassy said.
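The figures Jassy quoted can be sanity-checked with a little arithmetic. The following is only a rough sketch, assuming the $3.65 hourly rate covers the whole two-node cluster and that the cluster runs around the clock for a 365-day year; the function names are made up for illustration and are not part of any Amazon API.

```python
# Back-of-the-envelope check of the Redshift figures quoted in the keynote.
# Assumptions (not from Amazon): the hourly rate is for the entire cluster,
# and the cluster runs 24 hours a day for a 365-day year.

HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def annual_cost(hourly_rate_usd: float) -> float:
    """Yearly cost of a cluster billed at a flat hourly rate."""
    return hourly_rate_usd * HOURS_PER_YEAR


def cost_per_tb_year(hourly_rate_usd: float, nodes: int, tb_per_node: int) -> float:
    """Yearly cost divided by the cluster's raw capacity in terabytes."""
    return annual_cost(hourly_rate_usd) / (nodes * tb_per_node)


# Two 16 TB nodes at $3.65 per hour, as described for the Amazon.com test.
yearly = annual_cost(3.65)
per_tb = cost_per_tb_year(3.65, nodes=2, tb_per_node=16)

# Largest configuration mentioned: 100 nodes of 16 TB each.
max_capacity_tb = 100 * 16

print(f"Annual cost: ${yearly:,.0f}")
print(f"Cost per TB per year: ${per_tb:,.2f}")
print(f"Maximum capacity: {max_capacity_tb / 1000} PB")
```

Under those assumptions the yearly bill lands just under $32,000 and the per-terabyte cost just under $1,000, which lines up with both numbers Jassy cited, and 100 of the larger nodes work out to the 1.6 petabyte ceiling.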

"Some multi-hour queries finish in under an hour, and some queries that took five to 19 minutes on our current data warehouse are now returning in seconds with Amazon Redshift," said Erik Selberg, manager of Amazon.com's data warehouse team, in a statement.

Redshift's underlying data warehouse engine is powered by ParAccel, a venture-backed company with a deep bench of data warehousing veterans that offers its own high performance analytic database. Initially Redshift will support BI tools from Jaspersoft and MicroStrategy but Jassy said it will also support other leading tools including Cognos from IBM and BusinessObjects from SAP. Early customers that are already participating in a private beta are Flipboard, the team of NASA/Jet Propulsion Labs, Netflix and Schumacher Group.

The service is available now for a limited preview but Amazon is targeting early next year to make Redshift commercially available.

So will Redshift take a bite out of the traditional data warehousing business? That remains to be seen but if Amazon delivers the price-performance that it's promising, it'll offer a compelling alternative, particularly to organizations that need to analyze information but can't afford a traditional data warehouse today.

"It doesn't necessarily mean customers are going to chuck the data warehouses they've already got," said Forrester Research analyst James Staten in a telephone interview. "If you've already got one, you've already sunk that cost in. But if you're going to have to double or triple that data warehouse in size, it's really going to be hard to justify the cost of keeping them on premises."

That said, despite the rapid growth of the cloud business of Amazon and other providers, many organizations remain reluctant to move mission-critical or sensitive data off premises, and that could certainly impact how quickly data warehousing and big data analytics move to the cloud.

Jassy made clear Amazon will continue its path to be a disruptive force in datacenter computing and I'll spell that out in a post on Thursday following Amazon CTO Werner Vogels' keynote.

Posted by Jeffrey Schwartz on 11/28/2012

In its latest bid to unify converged datacenters with cloud infrastructure and services, Cisco today said it has agreed to acquire Cloupia for $125 million.

Cloupia, founded in 2009, is based in Santa Clara, Calif., and offers software that lets organizations administer traditional datacenter infrastructure alongside cloud-based infrastructure. Cloupia's software provides a common interface to manage and monitor infrastructure across physical, virtual and cloud environments and is aligned with key providers.

In addition to an existing alliance with Cisco, Cloupia has partnerships with Amazon Web Services (AWS), EMC, Hewlett Packard, NetApp, Rackspace and the Virtual Computing Environment (VCE), a company formed by Cisco and EMC. Cloupia's flagship product, the Unified Infrastructure Controller, lets organizations build private clouds and manage hybrid infrastructures.

Hilton Romanski, Cisco's vice president of business development, said in a blog post that the move builds on the company's effort to enable enterprise customers to manage Cisco's Unified Computing System and Nexus switches along with third party cloud infrastructure and services. Romanski explained how the deal accomplishes that goal:

Cisco's acquisition of Cloupia benefits Cisco's Data Center strategy by providing single "pane-of-glass" management across Cisco and partner solutions including FlexPod, VSPEX, and Vblock. Cloupia's products will integrate into the Cisco data center portfolio through UCS Manager, UCS Central, and Nexus 1000V, strengthening Cisco's overall ecosystem strategy by providing open APIs for integration with a broad community of developers and partners.

Similar to previous acquisitions in cloud management, such as Tidal, LineSider and NewScale, the acquisition of Cloupia also complements Cisco's Intelligent Automation for Cloud (IAC) solution.

In short, he concludes the acquisition of Cloupia will help Cisco provide intelligent network orchestration and management by bridging traditional datacenters and cloud infrastructure. It aims to offer the benefits of cloud automation by providing a view of an organization's entire compute, network, storage, VM and operating system resources.

Posted by Jeffrey Schwartz on 11/15/2012

Should organizations scrap their SharePoint deployments in favor of Office 365 or some other instantiation of Microsoft's collaboration platform that's subscription-based or hosted elsewhere? Microsoft left little doubt about the answer to that question as it showcased SharePoint 2013 and the SharePoint Online upgrades to its Office 365 service this week at the annual SharePoint Conference in Las Vegas.

"We really recommend moving to the cloud for the best experience overall," said Jeff Teper, the Microsoft corporate vice president known as the "father of SharePoint," speaking in his opening keynote at the annual SharePoint Conference on Monday. "We understand not everyone is there yet. This will take time. People who want to run their own servers, that's great. We have the best server release we've ever done in SharePoint 2013. The thing you should take away from our cloud focus is all we've learned about optimizing the system and deployment and monitoring, we've put into the server product and put into the deployment guidance."

SharePoint 2013's "Cloud-First" model follows in the footsteps of Microsoft's promise that it will deliver infrastructure software and applications as a cloud service first or simultaneously with the release of the on-premise version of its key products. That came to life with last year's duo of Dynamics CRM Online and Dynamics CRM. Now Microsoft is employing the same approach with the latest version of SharePoint Online in the Office 365 service and SharePoint 2013.

One of many distinctive new cloud features in SharePoint 2013 and SharePoint Online is the new SkyDrive Pro, an evolution of the SharePoint Workspace. SkyDrive Pro raises the bar in synchronizing content between SharePoint Sites and workers' various devices. SkyDrive Pro is modeled after the consumer-based SkyDrive service, except it's built into SharePoint, which allows IT organizations to manage it.

Experts are predicting more rapid than usual uptake for the new release of SharePoint and Office 365, primarily due to the major overhaul of the SharePoint experience, which brings enterprise social networking to the forefront.

A Forrester Research poll of 153 clients who already have SharePoint found 68 percent of respondents planned to introduce the new version within two years (37 percent within the first year and 31 percent within the second). What's interesting about that finding is 70 percent of that sample said they already have upgraded to SharePoint 2010, which is unusual since organizations typically skip subsequent releases to amortize their investments.

"This is conjecture here but it could be around the social experience," said Forrester analyst Rob Koplowitz in an interview. "The feedback on the social facilities in SharePoint 2010 was pretty dismal. That might be the driver but others include the need for improved document and records management. Also, it could be they're trying to move to a more stable development environment."

Speaking of social networking, that's where Yammer comes in, the popular social networking company Microsoft just acquired for $1.2 billion. Microsoft announced it's bundling the cloud-based enterprise social networking service into SharePoint 2013 and Office 365, in addition to selling it as a standalone offering, and plans further integration.

In the annals of Microsoft's cloud transition, 2010 will be remembered as the year CEO Steve Ballmer proclaimed the company is "all-in." With the revamp of SharePoint and Office, we may get our biggest sense yet of how many of Microsoft's customers are all-in.

Posted by Jeffrey Schwartz on 11/15/2012

Cloud Foundry, the VMware-sponsored open source initiative to provide interoperable platform as a service clouds, moved to simplify its effort to provide PaaS interoperability.

That interoperability comes in the form of its new Cloud Foundry Core, a framework aimed at enabling developers to build applications that are portable across PaaS-based clouds. It includes an open mechanism to validate that an app is portable along the lines of the Cloud Foundry specs, allowing developers to enter an API endpoint to check whether it is Cloud Foundry Core-compatible.

It provides common capabilities based on the Cloud Foundry Core Definition (CFCD), which includes common runtimes and services based on open development standards such as Java, Ruby, Node.js, MongoDB, MySQL, PostgreSQL, RabbitMQ and Redis.

In connection with the release of Cloud Foundry Core, five cloud providers launched compatible instances: AppFog, which provides a PaaS cloud deployment architecture; CloudFoundry.com, VMware's public instance of Cloud Foundry based on its vSphere infrastructure; Micro Cloud Foundry, the tool that lets developers run Cloud Foundry instances on their client devices; Tier 3, an enterprise infrastructure as a service (IaaS) provider; and Uhuru Software, which allows developers to run their .NET and SQL Server applications on Cloud Foundry.

Posted by Jeffrey Schwartz on 11/15/2012

Like millions of people in the Northeast, I am hunkering down as Hurricane Sandy is living up to its promise as the worst storm to hit this region in decades.

We have been told to expect power outages of anywhere from seven to 10 days -- not a prospect I am looking forward to, if that prediction comes true. Call me a prima donna, but I'm not one who enjoys roughing it, even though as a child I did my share of camping. But that was a long time ago.

I have done everything I can do to prepare for this storm. I stocked up on batteries early on (you can't find D batteries anywhere now to save your life), water, non-perishable food and an extra bottle of wine. And because business doesn't stop, of course I did whatever I could to ensure I could work, presuming we are otherwise safe.

First, I purchased a myCharge device, which will let us charge our cell phones up to three times without using the car charger. Then, of course, I made sure my data was backed up both on a portable flash drive that I will carry with me and in the cloud. I also purchased a converter that will let me charge my netbook via the car's battery.

To ensure data is available, I backed it up to two personal cloud sites, Dropbox and Microsoft's SkyDrive. That is an approach I wouldn't have taken in the past, but given the number of highly publicized outages that Amazon Web Services (which had one just last week), Microsoft, Google and others have experienced, I believe redundancy greatly increases the likelihood of data availability.
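
To put rough numbers on that reasoning, here is a minimal sketch in Python. The 99.9 percent availability figure is purely an assumption for illustration, not a number published by Dropbox or Microsoft, and the calculation assumes the two services fail independently of each other, which a widespread regional disaster could violate.

```python
def prob_all_unavailable(availabilities):
    """Chance that every provider is down at the same moment.

    Assumes outages are independent, which is a simplification.
    """
    p = 1.0
    for availability in availabilities:
        p *= 1.0 - availability
    return p


# Hypothetical availability for each service (illustrative only).
ASSUMED_AVAILABILITY = 0.999

single = prob_all_unavailable([ASSUMED_AVAILABILITY])
both = prob_all_unavailable([ASSUMED_AVAILABILITY, ASSUMED_AVAILABILITY])

print(f"One provider unreachable:   {single:.6f}")
print(f"Both providers unreachable: {both:.6f}")
```

Under those assumptions, a second copy cuts the chance of being locked out of the data from roughly one in a thousand to roughly one in a million.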

Many are still reluctant to use cloud services to back up their personal files and I admit I have had my reservations. But I have come to the conclusion that the risk of anyone accessing my data is far less probable than the threat of losing files and photos if a catastrophe were to strike. And businesses need to think in the same way, while taking the appropriate measures to secure sensitive data.

How has Hurricane Sandy changed your thinking about or use of cloud services, both personally and for business-critical data? Comment below or e-mail me at [email protected].

Posted by Jeffrey Schwartz on 10/30/2012

At its annual Cloud Innovation Forum here in New York, IBM this week emphasized Platform as a Service (PaaS) as the next frontier for enterprise cloud computing.

IBM spent much of the one-day event, attended by 100 customers and 200 other stakeholders including business partners, talking up PaaS. The company's PaaS offering, called SmartCloud Application Services (SCAS), is available in pre-release form for customers of Big Blue's existing infrastructure as a service (IaaS) offering, SmartCloud Enterprise (SCE) and it will be generally available later this quarter.

Only a small but incrementally growing percentage of enterprises have started using PaaS for production-oriented applications, according to industry analysts. Moreover, there are a number of companies with various PaaS offerings, and shops are evenly split between the various services they envision using, according to IDC senior VP and chief analyst Frank Gens, who gave a presentation at the IBM event. Among the widely preferred PaaS services are Google App Engine, Microsoft's Windows Azure, IBM's SmartCloud PaaS, VMware Cloud Foundry, Salesforce.com's Force.com, NetSuite SuiteCloud, the Intuit Partner Platform and Red Hat OpenShift, according to an IDC study.

IBM used its event to release the results of a study based on its own survey of 1,500 IT decision makers in 18 countries (both mature and growth markets). It found that the need to manage proliferating Big Data is a key driver for organizations that are either looking at or going all-in on PaaS.

Driving Big Data are social media, mobile device usage, and data analytics and integration. Customers "are starting to think about how they move Big Data activity onto the cloud and the applications needed to manage that," said Kevin Thompson, manager of IBM's Center for Applied Insights, who presented the results of the survey to a group of journalists.

IBM concluded there are four types of PaaS users: Pioneers, those creating apps that enable new business capabilities using PaaS, which account for 16 percent of the sample; Experimenters, shops dipping their toes into PaaS by attempting to take an existing app or business process and move it to the cloud, amounting to 12 percent; Preparers, who plan to start using PaaS, at 12 percent; and Observers, sitting on the sidelines for now, at 39 percent.

I asked Jim Comfort, VP of IBM's SmartCloud Strategy, what percentage of IBM shops he believes are using PaaS. "This is a multiyear journey," Comfort responded. "I think almost every client has one or two teams or projects that they are experimenting with in this new category. Everyone is trying to find ways to play. As far as the mainstream, I would expect 25 percent over the next several years will shift their development [toward PaaS] once they find a set of tools that do what they really want to do. That's just my guess."

IBM's new PaaS services consist of application patterns, layers that run on top of its IaaS cloud. Existing customers can use their SCE accounts to develop and deploy apps on SCAS via the SCE portal. IBM describes these patterns as pre-defined software components designed to expedite the development and deployment of cloud-based apps, based on multiple pre-defined architectures drawn from decades of customer and partner engagements.

"It provides the tools to greatly simplify the development of Web applications using the infrastructure to hide all the complexity," Comfort said. The initial offering is targeted at Web application services. Others in the pipeline include database services, mobile and analytic services, he said.

The first services made available on the new offering are Collaborative Lifecycle Management and Workload Service. The Collaborative Lifecycle Management service lets development managers add users and roles through the portal, which allows for monitoring and ensuring data availability. It uses IBM's Rational developer tool suite to support the application development lifecycle of tracking, designing, implementing, building, testing and deploying apps. The service supports team-based application lifecycle development via Rational Team Concert, Rational Requirements Composer and Rational Quality Manager. Pricing will be announced at GA but IBM will bill customers on a per-user, per-month basis.

Workload Service is aimed at replacing premises-based middleware, notably WebSphere in IBM shops. It provides policy-based automated scaling and app management via the Workload Deployer, which IBM calls the "brains" of the service. Developers can deploy virtual systems, the traditional model for deploying and connecting VMs, or the newer virtual applications, in which the developer deploys the actual apps.

Like IBM's core applications, it is designed for Java-based apps, though IBM's Comfort said the company plans to support other popular languages including Ruby, PHP and Microsoft's .NET. The new PaaS offering also includes Web Applications Services (WAS), virtual databases, virtual system patterns and Java app platforms. Customers will pay a usage-based hourly or monthly rate.

Comfort emphasized that with IBM's approach to PaaS, compute and app infrastructure run in the cloud while data remains on premises. That matters, he said, "because applications and data prove to be some of the most strategic assets a company has."

Posted by Jeffrey Schwartz on 10/18/2012

Microsoft's deal to acquire StorSimple Tuesday for an undisclosed amount fills a key hole in Redmond's effort to offer enterprises its so-called Cloud OS.

StorSimple is a three-year-old provider of cloud-integrated storage (CIS) appliances that allow those who manage datacenters to add public cloud services to the storage tier of an enterprise network. By using cloud services for data storage, StorSimple argues, companies can offer improved disaster recovery while lowering total cost of storage ownership by 60 to 80 percent.

The CIS appliances extend SAN snapshots, primary storage, backup and archive data to cloud-based services from Amazon Web Services, Google, IBM, Nirvanix and EMC's Atmos storage running in AT&T's public cloud, as well as OpenStack services from Dell, HP and IBM and, of course, Microsoft's Windows Azure.

"A lot of mainstream enterprise IT customers are choosing Windows Azure with StorSimple," said co-founder and CEO Ursheet Parikh in a short pre-recorded video discussion embedded in a blog post by Michael Park, corporate VP for Microsoft's server and tools business.

Does that mean once StorSimple becomes part of Microsoft that it will only use Windows Azure as a cloud target? A Microsoft spokeswoman would only say: "As a result of this announcement nothing changes. We have no additional information to share at this time."

StorSimple says its redundant disk controller ensures high availability and no single point of failure, while enabling non-disruptive software upgrades. The appliances include an application optimization plug-in architecture that provides plug-ins for individual files, virtual machine libraries and client devices as well as SharePoint and Exchange. They are also certified for Windows Server and VMware infrastructures.

Posted by Jeffrey Schwartz on 10/18/2012