Google is adding an extra layer of security for developers building applications that access a variety of its server-side platform services.

The company's new Service Accounts, launched Tuesday, will provide certificate-based authentication to Google APIs for server-to-server interactions. Until now, Google secured its APIs in these scenarios via passwords or shared keys.

"This means, for example, that a request from a Web application to Google Cloud Storage can be authenticated via a certificate instead of a shared key," said Google product manager Justin Smith, in a blog post, noting that unlike passwords and shared keys, certs can't be guessed.

In addition to Cloud Storage, Google's Prediction API, URL Shortener, OAuth 2.0 Authorization Server, APIs Console and its API libraries for Python, Java and PHP will support certificates. Other APIs and client libraries, including Ruby and .NET, will follow over time, according to Smith.

Service Accounts are implemented as an OAuth 2.0 flow. An app using the service generates a JSON structure, signs it with its private key and encodes it as a JSON Web Token (JWT). The app presents the JWT to Google's OAuth 2.0 Authorization Server, which returns an access token. The app then sends that token with its requests to Google Cloud Storage or the Prediction API.
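To make the flow concrete, here is a minimal, standard-library-only Python sketch of building such a JWT and the token request that carries it. It is not Google's client library. The claim names (`iss`, `scope`, `aud`, `iat`, `exp`) follow the JWT bearer assertion format Google documents for service accounts. The service account address, scope and endpoint URL in the example are hypothetical placeholders. One substitution is deliberate: HMAC-SHA256 (`HS256`) stands in for the RSA-SHA256 (`RS256`) signature a real service account computes with its private key, so the sketch needs no third-party crypto package.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional
from urllib.parse import urlencode


def b64url(data: bytes) -> str:
    """Base64url-encode without '=' padding, as JWTs (RFC 7519) require."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def encode_json(obj: dict) -> str:
    """Serialize compactly, then base64url-encode one JWT segment."""
    return b64url(json.dumps(obj, separators=(",", ":")).encode("utf-8"))


def build_assertion(issuer: str, scope: str, audience: str, key: bytes,
                    now: Optional[int] = None, lifetime: int = 3600) -> str:
    """Build a compact JWT of the form header.claims.signature.

    Assumption: HS256 (HMAC-SHA256 with a shared secret) replaces the
    RS256 signature a real service account makes with its private key.
    """
    now = int(time.time()) if now is None else now
    header = {"alg": "HS256", "typ": "JWT"}
    claims = {
        "iss": issuer,          # the service account's identity
        "scope": scope,         # the API access being requested
        "aud": audience,        # the authorization server that checks the JWT
        "iat": now,             # issued-at time, seconds since the epoch
        "exp": now + lifetime,  # short-lived expiry
    }
    signing_input = encode_json(header) + "." + encode_json(claims)
    signature = hmac.new(key, signing_input.encode("ascii"), hashlib.sha256).digest()
    return signing_input + "." + b64url(signature)


def token_request_body(assertion: str) -> str:
    """Form-encoded body an app would POST to the token endpoint to get an access token."""
    return urlencode({
        "grant_type": "urn:ietf:params:oauth:grant-type:jwt-bearer",
        "assertion": assertion,
    })


if __name__ == "__main__":
    jwt = build_assertion(
        issuer="example-app@example.test",               # hypothetical
        scope="https://example.test/auth/storage.read",  # hypothetical
        audience="https://auth.example.test/token",      # hypothetical
        key=b"demo-secret",
    )
    print(token_request_body(jwt))
```

The design matches the point Smith makes about shared keys. With a real RS256 key pair, the authorization server checks the signature against the service account's public certificate, so the private key never has to leave the app.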

Adding certificate-based authentication will be welcome to those who require better security than passwords and shared keys offer, said Forrester Research analyst Eve Maler. "Government agencies and other high-risk players generally demand certificate-based authentication," she said. "This decision by Google enables, for its service ecosystem, this stronger option for those who need it. Google has experimented rapidly to come up with maximally effective API security mechanisms."

Developers can set up Service Accounts on the Google APIs Console.

Posted by Jeffrey Schwartz on 03/21/2012 at 1:14 PM

One year has passed since Hewlett-Packard announced its plans to launch a public cloud service, and it appears that service will arrive in May.

Zorawar Biri Singh, senior vice president and general manager of HP's cloud services business, last week told The New York Times that the service is on pace to go online in two months. While HP is launching a portfolio of public cloud infrastructure services similar to those of Amazon Web Services, Singh is setting modest expectations for taking on the behemoth.

"We won't pull (Amazon's) customers out by the horns but we already have customers in beta who see us as a great alternative," Singh said, adding HP does not intend to compete on price. Fully aware that Amazon has aggressively cut its rates, Singh said HP will compete by offering more "personal sales and service."

Of course, HP won't stand alone on that front, as players such as Rackspace, IBM and Microsoft, just to name a few, also promote their focus on customer service. That said, HP can't afford not to offer a viable public cloud service for enterprises. Along with its public cloud service, Singh said HP will offer:

- Tools for Ruby, Java and PHP developers

- Support for provisioning and management of workloads remotely

- An online store where customers can rent software in HP's cloud (as indicated last year)

- Connectivity to private clouds

- A platform layer with third party services

Offering software as a service also appears high on the agenda. The first will be a data analytics service, leveraging last year's acquisitions of Vertica and Autonomy.

HP's cloud services will launch initially with datacenters in the United States on both the east and west coasts, with a global rollout to follow. Like any major IT vendor, HP knows it must execute well in the cloud. Even if it isn't a major revenue generator in the short term, a robust cloud portfolio will be critical to HP's future.

Posted by Jeffrey Schwartz on 03/15/2012 at 1:14 PM

It was bad enough that Microsoft's Windows Azure cloud service was unavailable for much of the day on Feb. 29 thanks to the so-called Leap Day bug. But customers struggled to find out what was going on and when service would be restored.

That's because the Windows Azure Service Dashboard itself wasn't fully available, noted Bill Laing, corporate VP of Microsoft's Server and Cloud division, in a blog post Friday that offered an in-depth post-mortem with extensive technical detail on what went wrong. In very simple terms, a coding error led the system to calculate a future date that didn't exist.
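As Laing's post-mortem describes it, the faulty code computed a certificate's expiration date one year out by adding one to the current year. On Feb. 29, 2012, that produced Feb. 29, 2013, a date that doesn't exist. The Python sketch below illustrates that class of bug; it is not Microsoft's code. Python's `date.replace` raises `ValueError` for the nonexistent date. The safer variant shows one possible fix, which is an assumption on our part: it clamps to Feb. 28 when the target year isn't a leap year.

```python
import calendar
from datetime import date


def naive_one_year_later(d: date) -> date:
    """Add one to the year and keep month/day: the pattern behind the bug."""
    return d.replace(year=d.year + 1)  # raises ValueError when d is Feb. 29


def safe_one_year_later(d: date) -> date:
    """Same idea, but map Feb. 29 to Feb. 28 when the next year isn't a leap year."""
    year = d.year + 1
    if d.month == 2 and d.day == 29 and not calendar.isleap(year):
        return date(year, 2, 28)
    return d.replace(year=year)


if __name__ == "__main__":
    leap_day = date(2012, 2, 29)
    try:
        naive_one_year_later(leap_day)
    except ValueError as err:
        print("naive:", err)  # the nonexistent Feb. 29, 2013 is rejected
    print("safe:", safe_one_year_later(leap_day))  # safe: 2013-02-28
```

Time-related edge cases like this are exactly what Laing says Microsoft's upgraded code analysis tools are meant to catch.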

But others may be less interested in what went wrong than in how reliable Windows Azure and public cloud services will be over the long haul. On that front, Laing was pretty candid, saying, "The three truths of cloud computing are: hardware fails, software has bugs and people make mistakes. Our job is to mitigate all of these unpredictable issues to provide a robust service for our customers."

Did Microsoft do enough to mitigate this issue? Laing admits Microsoft could have done better to prevent, detect and respond to the problems. On prevention, Microsoft said it will improve its testing for time-related bugs by upgrading its code analysis tools to uncover those and similar types of coding issues. The problem also took 75 minutes to detect, which Laing acknowledged was too long, noting in particular the difficulty of detecting faults in the guest agent, where the bug was found.

Exacerbating the whole matter was the breakdown in communication. The Windows Azure Service Dashboard failed to "provide the granularity of detail and transparency our customers need and expect," Laing said. Hourly updates failed to appear and information on the dashboard lacked helpful insight, he acknowledged.

"Customers have asked that we provide more details and new information on the specific work taking place to resolve the issue," he said. "We are committed to providing more detail and transparency on steps we're taking to resolve an outage as well as details on progress and setbacks along the way."

Noting that customer service telephone lines were jammed due to the lack of information on the dashboard, Laing promised users will not be kept in the dark. "We are reevaluating our customer support staffing needs and taking steps to provide more transparent communication through a broader set of channels," he said. Those channels will include Facebook and Twitter, among other forums.

Windows Azure customers affected by the outage will receive a 33 percent credit, which will automatically be applied to their bills. However, such credits, while welcome, rarely make up for the cost associated with downtime. But if Microsoft delivers on Laing's commitments, perhaps the next outage will be less painful.


Posted by Jeffrey Schwartz on 03/14/2012 at 1:14 PM

Once again, Amazon Web Services said it is cutting the price of its cloud offerings, including its Elastic Compute Cloud (EC2), Relational Database Service (RDS) and Elastic MapReduce (EMR) offerings.

This latest reduction marks the 19th time Amazon has cut prices of its cloud services in the six years since launching them. Just last month, Amazon reduced pricing for its Simple Storage Service (S3) and Elastic Block Store (EBS) offerings.

"Driving costs down for our customers is part of the DNA of Amazon and therefore also part of the DNA of AWS," said Amazon CTO Werner Vogels in a blog post. "We will continue to drive AWS prices down, even without any competitive pressure to do so. And we will work hard to do this across all the different services."

The cuts will amount to a 6 percent savings for usage of the On-Demand version of EC2 and 33 percent for its Reserved Instance offerings. For RDS, Amazon is reducing On-Demand prices by up to 10 percent and Reserved Instances by up to 42 percent. AWS evangelist Jeff Barr provides a complete rundown in a blog post.

While Vogels may say there's no competitive pressure to lower Amazon's prices, the cut comes just two weeks after Microsoft announced it is lowering some SQL Azure prices and just months after it simplified pricing for Windows Azure. Google this week also lowered its cloud storage pricing, PCWorld reported.

Nevertheless, here's Vogels' reasoning:

"Reducing pricing is not just a matter of passing on the benefits of economies of scale, although that certainly plays a role," he writes. "Experiences with the highly scalable, ultra-efficient supply chains of Amazon.com drive great new innovations in the highly redundant supply chains for AWS, which lead to new efficiencies that we can pass on to our customers. Also on the business model side, we continue to innovate, as the introduction of Reserved Instances and Spot Instances have helped customers make significant savings."

Is Amazon upping the ante -- or should I say lowering the ante -- for cloud service pricing? Leave a comment below or drop me a line.

Posted by Jeffrey Schwartz on 03/08/2012 at 1:14 PM

One of Google's largest cloud partners is now even larger. Cloud Sherpas, a major provider of Google Apps, this week said it has merged with GlobalOne, making it a major Salesforce.com partner, as well.

Since its founding in 2008, Atlanta-based Cloud Sherpas has focused its business on replacing premises e-mail and collaboration platforms with cloud services based on the Google Apps stack. New York-based GlobalOne has concentrated on offering CRM services from Salesforce.com since its formation in 2007.

GlobalOne CEO David Northington will now be CEO of Cloud Sherpas, while former Cloud Sherpas CEO Douglas Shepard will become president of Cloud Sherpas' Google business unit.

"Both GlobalOne and Cloud Sherpas were born in the cloud as pure-play cloud service providers," Northington said in a statement. "The combined firm further enables our singular mission -- to help customers transform their businesses by leveraging the power of the cloud. The new Cloud Sherpas has the talent, domain expertise and geographic reach to help businesses improve IT agility and lower costs through a series of cloud consulting, integration and support services."

And giving Cloud Sherpas a further boost, Columbia Capital, which last year invested $15 million in the provider, is adding a $20 million round. Cloud Sherpas said it intends to use the funds to expand into new geographic regions, add to its portfolio of vertical market offerings and extend its cloud-based applications.

The new Cloud Sherpas employs 300 people and has a presence in Atlanta, Ga.; Brisbane, Australia; Chicago; Manila, Philippines; New York; San Francisco; Sydney, Australia; and Wellington, New Zealand.

Is it only a matter of time before a major provider scoops up Cloud Sherpas? Northington told The New York Times that Cloud Sherpas is targeting its own growth to offer more cloud-based services and plans to broaden its portfolio.

Posted by Jeffrey Schwartz on 03/08/2012 at 1:14 PM

I have yet to talk to a cloud provider that doesn't emphasize the security of its services. So it should come as no surprise that when I talked to executives overseeing IBM's cloud efforts last week at the company's PartnerWorld Leadership Conference in New Orleans, security was a critical part of the conversation.

IBM's cloud security framework brings together assets from its Tivoli security software business and Rational development tools unit, as well as the identity management and data security approaches embedded in its servers, storage and software offerings, which range from development tools to middleware to business analytics.

But when it comes to SmartCloud Enterprise, the company's public cloud Infrastructure as a Service (IaaS), IBM's approach to security begins with a simple prerequisite: You must be a known customer before it will host your data, explained Rich Lechner, vice president of cloud for IBM's Global Technology Services business.

"An individual can't simply sign up with a credit card," Lechner said. "The reason being, in a multitenant environment like that, part of the security model says you have to know who else is in the building. A lot of other cloud providers don't offer that level of security. They are not managing or ensuring the identity of the other tenants."

Lechner acknowledged that this extra process has a downside. "It slows the on-boarding process because you now have to validate the client, but we believe, and our clients believe, that the benefits in terms of security outweigh the negatives of the slower on-boarding process."

Lechner also said IBM is using cloud technologies to power its global Security Operations Center (SOC), which he said tracks 5 billion security events daily. Hundreds of researchers who collaborate via this cloud discover between 50 and 300 brand-new, never-before-seen threats worldwide, Lechner said. They assess, identify and resolve those threats, then develop inoculations and distribute them to IBM's 2,000 managed services clients, usually within 24 hours. Known threats are typically inoculated within minutes, according to Lechner.

"It's an interesting utilization of the cloud, in the sense that it leverages the processing, analytical and global reach," Lechner said.

Posted by Jeffrey Schwartz on 03/05/2012 at 1:14 PM

Rackspace's acquisition of SharePoint911, announced last week, is intended to further the company's reach as a provider of cloud-based SharePoint implementations. But in a departure for the hosting and cloud services provider, the move also aims to give Rackspace a stake in supporting premises-based deployments of SharePoint.

Unusual as that might sound, the acquisition of SharePoint911, a leading boutique firm of Microsoft MVPs specializing in Microsoft's collaboration platform, is the latest step in Rackspace's effort to expand from a provider of purely hosted services to one that provides hybrid cloud offerings by adding support for installations running in customers' datacenters.

That transition began with Rackspace's widely publicized OpenStack project, aimed at letting customers use the open-source platform from Rackspace or other vendors supporting the project to deploy private clouds in their datacenters, explained Jeff DeVerter, a SharePoint architect at Rackspace. With OpenStack, the company has rolled out Rackspace Cloud: Private Edition and most recently tapped partner Redapt to help customers build private clouds based on the Rackspace implementation of OpenStack.

"Our tagline here is 'Fanatical Support.' We now are talking about 'fanatical anywhere' where we will reach out of our datacenter," DeVerter said in an interview. "First was to support OpenStack out of [customers'] datacenters. Now being able to support SharePoint wherever it might be ultimately will drive SharePoint adoption because we believe SharePoint is here to stay. It's what customers are using and we want them to be successful in bringing in this level of capability, which is what was required."

Rackspace entered the business of offering managed SharePoint services back in 2008. The idea at the time was to get more people to use Rackspace to host their SharePoint servers. The hosting provider handles configuration and installation of SharePoint and provides central administration. Rackspace also will create all the major SharePoint containers and ensure that a farm is running well. But if an end user didn't know how to upload a document or a customer wanted to create custom apps, that fell outside Rackspace's realm, according to DeVerter.

Customers fail to use SharePoint to its full potential, he argues, and as a result, Rackspace saw an opportunity to expand its SharePoint business to address such areas as application development and end user training. That means supporting SharePoint installations that run both inside its hosting facility and in customers' (or even other providers') datacenters.

"We will get into supporting stuff outside of our datacenter," DeVerter said. "That's obviously a business-changer for us at Rackspace as we have primarily only done work that has been inside any of our datacenters that are around the world. SharePoint911 has built their business on really helping get out of problems and work inside of SharePoint, regardless of where they are. They have supported some of our customers in our datacenter, and they've got people that they support all around the world in other datacenters or in their own company's datacenters. So we will continue that model. It will take some learning on our part to know how to do that well, but we are committed to having the best SharePoint experience for our customers, whether that's inside our datacenter or outside."

I spent some time chatting with DeVerter, who was joined by SharePoint911 founder Shane Young, about the deal. Here are some edited excerpts from that conversation:

Is Office 365 a threat?

DeVerter: The reality is, Office 365 is one of the best things that's happened to SharePoint because it really takes that SharePoint 2010 experience and makes it very accessible to people that require a low-cost-of-entry environment. But the problem still ends up that people need help getting moved into SharePoint and making it a compelling solution to transform their business. SharePoint911 today has a lot of customers who run inside of the Office 365 space, and one of their MVP consultants, Jennifer Mason, is a strong contributor to the knowledge base inside of Office 365 on how to be successful in that area. We could expect to continue to assist from the support perspective in Microsoft's datacenter for Office 365.

Are typical Office 365 customers those who already have SharePoint premises-based deployments?

DeVerter: My experience has been they aren't. They are either smaller SMBs or large enterprise customers but more of a department inside of there who are trying out SharePoint and trying to solve a specific scenario. The reason we don't see Office 365 as a threat is because people will learn about SharePoint and the things it can do and how it transforms their business but Office 365, as a fully integrated enterprise solution, really isn't there yet at this point. And so as customers grow up in SharePoint, often times we find them coming to Rackspace. From a historical perspective, we are taking those Office 365 customers to the next level as they grow up and out. So with the addition of SharePoint911, we will be supporting Office 365 customers.

Young: We haven't seen anyone in our customer base that has taken an existing internal deployment and moved it into Office 365.

Are those that are using Office 365 using it as a test bed to get into a premises-based version of SharePoint?

Young: A lot of people are just kicking tires. I don't know where they will end up yet but I do see it as an opportunity to figure it out at a low price point. Where they go from there, as they grow up and mature in what they want to do with it, is something we will start figuring out in the next couple of months, from the wave of people we've seen. I think a lot of the people who have adopted Office 365 are still in that exploration phase. They haven't been in it long enough to decide if it's a permanent solution for them or if they're going to need to grow into something else.

How do you see it playing out?

Young: I think they will outgrow Office 365 as it stands today. It gets you that core collaboration functionality but we've got a lot of people standing up public Web sites on SharePoint, we've got people who want to do BI solutions on SharePoint and Microsoft sees that. But right now, today, if you want to do anything more than straight-up collaboration, it's a challenge in Office 365.

What made you decide to become part of a large organization rather than maintain the boutique operation that you had?

Young: I had been involved with Rackspace since Jeff came on board. I did the original training on how to stand up and deploy SharePoint. I helped Jeff architect the solution there and it's really a company that I have believed in all along. It really speaks volumes. The other part of it is the entrepreneur in me. I've been the guy running the ship for almost seven years now. They have a lot of ideas, a lot of directions that I wanted to take that we've never been able to do because we were privately funded. Now that we are part of Rackspace, there's a laundry list of services that we can offer to the SharePoint world that barely exists today. It will give me a chance to really run with some of those ideas I've had over the years.

Can you describe those ideas?

Young: Not at this point. I'm holding that close to the vest until we plan on how we're going to do some of those things.

Understood. How quickly do you see them coming together?

Young: The sooner the better for me. This year.

Is it your understanding that all six of your MVPs will stay on board?

Young: Everyone has come over. The team is actually very excited to be on a bigger stage to do more.


Posted by Jeffrey Schwartz on 02/27/2012 at 1:14 PM

When it comes to policies that promote growth of cloud computing among the world's leading users of information technology, Japan comes out on top and the United States fourth, according to a report published this week by the Business Software Alliance.

But a lack of consistency in laws and economic policies among the 24 countries that account for 80 percent of the world's information and communications technology market is putting the promise of a robust global cloud marketplace at risk, according to a BSA study that forms the basis of its first Global Cloud Computing Scorecard.

"In a global economy, you should be able to get the technology you need for personal or business use from cloud providers located anywhere in the world," said BSA President and CEO Robert Holleyman in a statement. "But that requires laws and regulations that let data flow easily across borders. Right now, too many countries have too many different rules standing in the way of the kind of trade in digital services we really need."

Japan ranked first based on its comprehensive privacy policies that don't interfere with commerce, strong IP protections and criminal enforcement, a strong IT infrastructure and its leadership in developing standards, according to the report. In addition to the U.S., Australia, France and Germany ranked in the top five. Developing nations Brazil, China and India were at the bottom of the list.

Though countries in Europe scored well, the BSA said the European Union's proposed Data Protection Regulation could hamper the growth of cloud computing.

The BSA pointed to seven areas that countries need to address in creating policies that will ensure the growth of cloud computing:

- Privacy: Users need to be assured that their information won't be inappropriately used. Still, cloud providers need to be able to move data through the cloud efficiently.

- Security: Cloud providers must ensure security of data without having specific technologies mandated.

- Battling cybercrime: There must be adequate mechanisms for enforcement of laws and policies in place that enable cloud providers to contend with unauthorized access to data in the cloud.

- IP protection: Surely the most controversial of the seven, IP laws must provide "clear protection and vigorous enforcement" against infringements enabled by the cloud, the BSA says.

- Ensuring portability: Also sure to spark debate is the contention that governments should work with the industry to develop open standards that would enable interoperability, while at the same time putting legal requirements on cloud providers.

- Free trade: Governments should foster an open marketplace that reduces barriers to free trade, such as preferences for specific technologies or vendors.

- Building IT infrastructure: Governments should provide incentives to cloud providers that promote widespread availability of broadband networks.

"Cloud computing is a technological paradigm that is certain to be a new engine of the global economy," according to the report. "Attaining those benefits will require governments around the world to establish the proper legal and regulatory framework to support cloud computing."

The BSA, backed by heavyweights such as Apple, Adobe, CA Technologies, Intel, Microsoft, Quest Software and Symantec, now appears to be applying more pressure to lift barriers it sees impeding the growth of cloud computing, particularly in developing nations.

Despite the merits of some of the BSA's end goals, some of its proposals, not surprisingly, won't sit well with opponents of regulation. On the other hand, the study puts an added spotlight on some prevailing issues such as interoperability, security and availability -- all legitimate obstacles to cloud computing adoption worldwide.

Political realities notwithstanding, providers in their own right have an interest in continuing their quest to address the existing barriers to cloud computing. The question is, which will make it happen faster -- more regulation or the status quo? And regardless of expediency, which approach will create the most suitable and sustainable cloud computing environments for consumers and business users?

Does the BSA's report appear to offer legitimate ammunition to lift barriers to cloud computing or does it look more like a heavy-handed effort to compel a more highly regulated environment? And even if the latter is the case, would such an outcome be more conducive to reducing the barriers to cloud computing adoption, or will those obstacles ultimately be lifted under the current environment? Drop me a line or leave a comment below.

UPDATE, 2/28: I received an e-mail from BSA Technology Policy Counsel Chris Hopfensperger, who emphasized that the conclusion of BSA's report is not that it is looking to necessarily impose more regulation, but rather that existing regulations across the globe must not be in conflict with one another. Coordination of such regulations is paramount, as underscored at the beginning of this post.

"The central finding of BSA's Global Cloud Computing Scorecard is that an international patchwork of conflicting laws and regulations threatens to prevent the cloud from reaching truly global scale," Hopfensperger said. "To capture its full economic potential, BSA advocates harmonizing existing laws and regulations -- not necessarily piling on new ones. We would support new policies where no legal structure currently exists. Brazil is a good example of this. It is one of the few countries that has not adopted the Cybercrime Convention. You might argue that adopting it would mean more regulation, but our view is it would create a better business environment by establishing legal certainty that is more in keeping with international norms."

Hence, the revised headline of this post; I changed the original "More" to "Consistent."

Posted by Jeffrey Schwartz on 02/23/2012 at 1:14 PM

Dimension Data on Thursday is launching a public cloud offering that it says will compete with Amazon Web Services and Rackspace Hosting, among others.

The $5.8 billion global systems integrator and technology services provider is also launching private cloud implementation services, hybrid cloud offerings and a managed hosting service.

Johannesburg, South Africa-based Dimension Data, a subsidiary of NTT Group, had been signaling for some time that it planned to extend its cloud offerings, and it took a major step forward last summer with its acquisition of OpSource, which provides cloud hosting, automation and management services.

With operations in Santa Clara, Calif., Virginia, the United Kingdom, Ireland and India, OpSource already had a formidable cloud offering. At the time of the acquisition, Dimension Data said that OpSource would be the cornerstone of its new Cloud Business Unit. Now Dimension Data is extending that footprint globally through its datacenters in Asia, Australia and Africa, as well as in regions complementary to OpSource's. Dimension Data said it plans to have its global footprint rolled out by the end of the next quarter.

OpSource currently has 400 cloud clients, most of them in North America. "Our clients are in various stages of cloud adoption," said Jere Brown, CEO of Dimension Data Americas, in an interview. "OpSource has let us accelerate that journey and we will be leading that."

Brown said the company has aspirations of becoming a major provider of cloud services. While there is no shortage of providers of Infrastructure as a Service (IaaS), officials at Dimension Data said they are primed to compete with Amazon and Rackspace. "We will have one of the largest public cloud footprints in the industry," said Keao Caindec, CMO of Dimension Data's Cloud Business Unit.

The company's cloud portfolio is based on systems provided by Dell, EMC, Cisco and VMware. It is not based on VMware's vCloud platform, said Dimension Data Americas COO Wes Johnston, but rather on VMware's virtualization technology and Dimension Data's own custom-developed cloud management and orchestration platform, which it calls CloudControl.

In addition to its public cloud service, Dimension Data is now deploying private clouds for customers in their own datacenters, where the company will provide infrastructure, implementation, cloud orchestration and automation, all managed by the company.

For those that don't want private clouds in their own datacenters, Dimension Data's new hosted private cloud service will be run in the company's facilities. Third-party service providers can also use Dimension Data's offering to provide their own branded, managed cloud services.

The cloud portfolio also includes managed hosting, consisting of dedicated infrastructure and application management services linked to Dimension Data's public and private clouds. Through the managed hosting offering, Dimension Data will manage apps, databases, networks and virtual and physical servers.

A managed services option will include a variety of services including patch management, configuration of hardware and backup. And Dimension Data said through its Managed Cloud Platform, it will offer cloud-based backup and disaster recovery and the hosting of applications.

Posted by Jeffrey Schwartz on 02/23/2012 at 1:14 PM

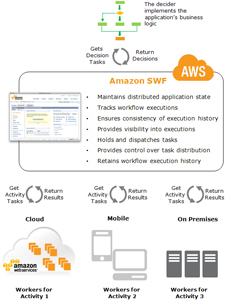

Looking to simplify the development and management of business-critical applications distributed across its public cloud and customers' private datacenters, Amazon Web Services on Wednesday launched a workflow service that coordinates all of the processing procedures within an app.

Amazon said its new Simple Workflow Service (SWF) is aimed at reducing the time-consuming process of building applications such as those that automate business processes for, say, running financial systems, conducting data analytics or managing cloud infrastructure services. SWF coordinates the tasks and manages their execution dependencies, scheduling and concurrency in conjunction with the application logic associated with that task, according to Amazon.

Furthermore, Amazon SWF "reliably coordinates all of the processing steps within an application," the company said.

"Amazon SWF is an orchestration service for building scalable distributed applications," said Amazon CTO Werner Vogels in a blog post. "Often an application consists of several different tasks to be performed in particular sequence driven by a set of dynamic conditions. Amazon SWF makes it very easy for developers to architect and implement these tasks, run them in the cloud or on premise and coordinate their flow."

Vogels added that an increasing number of apps have started to rely on asynchronous and distributed processing, and that scalability is a key requirement. "By designing autonomous distributed components, developers get the flexibility to deploy and scale out parts of the application independently as load increases," he noted.
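To make the coordination model more concrete, here is a toy, in-process Python sketch of the pattern Amazon describes for SWF. A decider reads the workflow's history and decides what to schedule next, and activity workers carry out the individual tasks. The sketch does not call the SWF API. Its event and decision names only loosely mirror SWF's vocabulary, and the three order-processing steps are hypothetical. In the real service, SWF keeps the history and deciders and workers poll it for tasks over the network, which is what lets each part run and scale independently, in the cloud or on premises.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical workflow: three steps that must run in this order.
STEPS: List[str] = ["validate_order", "charge_card", "ship_order"]


def decide(history: List[dict]) -> dict:
    """Decider: inspect the history so far and return the next decision."""
    if any(e["type"] == "ActivityTaskFailed" for e in history):
        return {"type": "FailWorkflowExecution"}
    done = [e["activity"] for e in history if e["type"] == "ActivityTaskCompleted"]
    if len(done) == len(STEPS):
        return {"type": "CompleteWorkflowExecution"}
    return {"type": "ScheduleActivityTask", "activity": STEPS[len(done)]}


def run_workflow(workers: Dict[str, Callable[[], str]]) -> Tuple[str, List[dict]]:
    """Stand-in for the service: ask the decider, dispatch to a worker, record the outcome."""
    history: List[dict] = []
    while True:
        decision = decide(history)
        if decision["type"] != "ScheduleActivityTask":
            return decision["type"], history
        name = decision["activity"]
        try:
            result = workers[name]()
            history.append({"type": "ActivityTaskCompleted", "activity": name, "result": result})
        except Exception as exc:  # a failed activity is recorded in history, not raised
            history.append({"type": "ActivityTaskFailed", "activity": name, "reason": str(exc)})


if __name__ == "__main__":
    workers = {
        "validate_order": lambda: "ok",
        "charge_card": lambda: "charged",
        "ship_order": lambda: "shipped",
    }
    outcome, history = run_workflow(workers)
    print(outcome)                           # CompleteWorkflowExecution
    print([e["activity"] for e in history])  # ['validate_order', 'charge_card', 'ship_order']
```

The decider keeps no state of its own and works only from the history. That means any decider instance can make the next decision, which is the kind of autonomy Vogels points to when he talks about scaling parts of an application independently.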

Among those that tested SWF prior to its release were NASA's Jet Propulsion Laboratory (JPL), which is using it to incorporate distributed resources both internally and externally so that apps can scale dynamically; Sage Bionetworks, a nonprofit biotechnology research organization that is using SWF as part of its computation platform to process molecular and clinical data sets; and cloud management vendor RightScale, which is using SWF to build infrastructure management capabilities more rapidly.

"Using Amazon SWF, we are able to reduce the time to market for our higher level infrastructure automation features," said RightScale CTO Thorsten von Eicken in a statement. "We are able to focus on our value-add without having to worry about the challenges that are associated with implementing a distributed workflow engine. In the end we are able to ship new features faster and don't have to concern ourselves with maintaining that engine."

Amazon has published a detailed rundown of how SWF works, along with documentation to help developers start building SWF-based applications. Below is a diagram of SWF:

[Diagram: overview of Amazon SWF. Source: Amazon]

Posted by Jeffrey Schwartz on 02/22/2012 at 1:14 PM

Rackspace Hosting is getting deeper into the SharePoint consulting business. The hosting and cloud provider on Thursday said it has acquired SharePoint911, a well-regarded boutique firm that provides enterprises with SharePoint consulting, custom development, design, implementation and training services.

Six of SharePoint911's consultants are Microsoft MVPs who have authored numerous books and are frequent speakers at industry events. Rackspace began offering managed SharePoint services in 2008. The acquisition gives Rackspace a staff of experienced SharePoint consultants who have architected numerous SharePoint projects for customers.

Founded in 2004 by Shane Young, Cincinnati-based SharePoint911 was a Technology Adoption Partner (TAP) for SharePoint 2010 before its release and has worked on numerous engagements involving the current release. SharePoint911 was listed as one of the top MVPs by Redmond Channel Partner last year.

[Image: Shane Young]

In a blog post announcing the deal, Young indicated he welcomed the resources of a large provider such as Rackspace. "SharePoint911 was founded on the principle that the complexities of SharePoint should not limit the solution's success within an organization," Young said. "With the support of Rackspace, we look forward to continuing that mission and spreading our capabilities within Fanatical Support to our current and future customers."

Rackspace CTO John Engates said in the blog post that SharePoint911 would extend the company's application delivery service capabilities. "SharePoint911 will add the talent and expertise to our teams to make SharePoint deployments easier for our customers while increasing their scalability and performance through better integration of the workload and infrastructure," Engates said.

It is not clear whether Rackspace intends to focus its SharePoint consulting offering on managed and cloud-based implementations or whether it will have its consultants continue to work on premises-based engagements. Officials at Rackspace and SharePoint911 did not immediately respond to requests for comment. Terms of the acquisition were not disclosed.

Posted by Jeffrey Schwartz on 02/16/2012 at 1:14 PM

When Rackspace launched Rackspace Cloud: Private Edition last year, the goal was to allow enterprises to build clouds based on OpenStack in their own datacenters or in colocation facilities of their choice. But Rackspace has determined that some customers, while they like the idea, don't want to do it themselves.

Its remedy is a partnership, announced Wednesday, with Redapt, a solution provider with expertise in OpenStack that deploys private clouds. Rackspace has named Redapt its partner of choice for such deployments, based on Redapt's work with companies such as Zynga, Citigroup and Red Hat. Redapt is also an established Dell partner.

Redapt will configure and install hardware equipped with the open source OpenStack cloud computing, storage and networking platform. The integrator will handle implementation, testing and configuration at its MergeCenters in North America and Europe. Redapt will then ship the hardware to the customer's datacenter or colocation facility and provide around-the-clock operations support.

I asked Jim Curry, general manager of Rackspace Cloud Builders, whether this was the first of many partners the company would announce. It appears Redapt will be the only one for the foreseeable future.

"I don't anticipate we need other partners today," Curry said. "We intend to continue to expand our offering and my guess is they will want to expand with us. We are very happy and think they can meet the demand and the needs we see from customers today."

In other words, there won't be a broad ecosystem of systems integrators (SIs) deploying and managing Rackspace Cloud Builders, though Curry didn't rule out offering customers other options or giving more partners the opportunity to offer private clouds from Rackspace. But that doesn't seem to be in the cards anytime soon.

Posted by Jeffrey Schwartz on 02/15/2012 at 1:14 PM